3 Convincing Reasons to Take Help from a Digital Agency

These days, a digital agency plays a role behind every successful company. It is important to make your online presence powerful, effective and influential, and to be careful about how you sound and look in your industry. It might interest you that most people form their impression of a company or business from its online presence.


Take Professional Help

One thing to underline here: you should take professional help. You cannot maintain and manage your online presence and platforms unless you have full-time professionals taking care of them. If you expect your existing staff members to handle it on the side, that is likely to be a big flop. It is better to get help from professionals, such as a digital agency and experts who devote all their time and effort to your organization. Here are some reasons to consider professionals:

- Access the Needed Skills

Building an in-house team to manage all of your digital marketing tasks and efforts is a challenge for many businesses. The skills your business needs are either hard to find or too expensive. On top of that, it is not financially feasible to recruit someone for a full-time or even part-time position if you don’t need their skills continually and consistently. After all, the campaigns you run will change at different times of the year. The alternative is to outsource your digital marketing tasks. Digital agencies have a core team of professionals, technicians, and designers who can get you the best results for your digital presence.

- Deadlines are Met with Ease

- Get Fresh Perspectives


Public Speaking Tips for Introverts

Verbal communication has never been my strength. I’m an introvert and generally an awkward human being. So you might be surprised to learn that each …

Digital Marketing News: Influencer & Data-Driven Marketing Stats, B2B Social Usage, & Twitter’s New Video Analytics

The post Digital Marketing News: Influencer & Data-Driven Marketing Stats, B2B Social Usage, & Twitter’s New Video Analytics appeared first on Online Marketing Blog – TopRank®.

Log File Analysis For SEO

Log file analysis is a lost art. But it can save your SEO butt. This post is half-story, half-tutorial about how web server log files helped solve a problem, how we analyzed them, and what we found. Read on, and you’ll learn to use grep plus Screaming Frog to saw through logs and do some serious, old school organic search magic (Saw. Logs. Get it?!!! I’m allowed two dad jokes per day. That’s one).

If you don’t know what a log file is or why it matters, read What Is A Log File? first.

The Problem

We worked with a client who rebuilt their site. Organic traffic plunged and didn’t recover.

The site was gigantic: Millions of pages. We figured Google Search Console would explode with dire warnings. Nope. GSC reported that everything was fine. Bing Webmaster Tools said the same. A site crawl might help but would take a long time.

Phooey.

We needed to see how Googlebot, BingBot, and others were hitting our client’s site. Time for some log file analysis.

This tutorial requires command-line-fu. On Linux or OS X, open Terminal. On a PC, use a utility like cmder.net.

Tools We Used

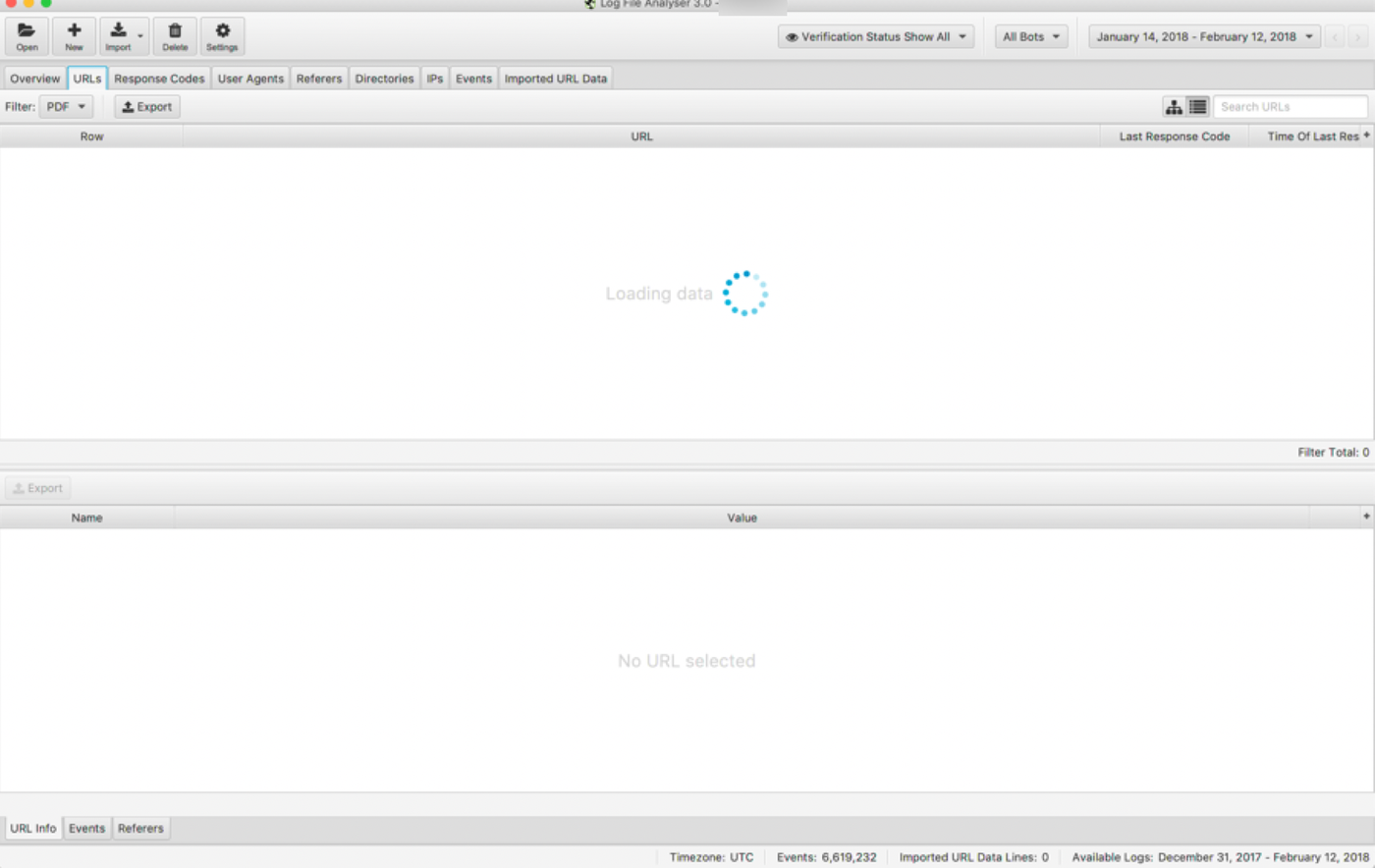

My favorite log analysis tool is Screaming Frog Log File Analyser. It’s inexpensive, easy to learn, and has more than enough oomph for complex tasks.

But our client’s log file snapshot was over 20 gigabytes of data. Screaming Frog didn’t like that at all:


Screaming Frog says “WTF?”

Understandable. We needed to reduce the file size, first. Time for some grep.

Get A Grep

OK, that’s dad joke number two. I’ll stop.

The full, 20-gig log file included browser and bot traffic. All we needed was the bots. How do you filter a mongobulous log file?

- Open it in Excel (hahahahahahaahahahah)

- Import it into a database (maybe, but egads)

- Open it in a text editor (and watch your laptop melt to slag)

- Use a zippy, command line filtering program. That’s grep

“grep” stands for “global regular expression print.” Grep’s parents really didn’t like it. So we all use the acronym, instead. Grep lets you sift through large text files, searching for lines that contain specific strings. Here’s the important part: It streams through the file line by line and never has to display it. Your computer can process a lot more data if it doesn’t have to show it to you. So grep is super-speedy.

Here’s the syntax for a typical grep command:

grep [options] [thing to find] [files to search for the thing]

Here’s an example: It searches every file that ends in “*.log” in the current folder, looking for lines that include “Googlebot,” then writes those lines to a file called botsonly.txt:

grep -h -i 'Googlebot' *.log >> botsonly.txt

The -h means “Don’t record the name of the file where you found this text.” We want a standard log file. Adding the filename at the start of every line would mess that up.

The -i means “ignore case.”

Googlebot is the string to find.

*.log says “search every file in this folder that ends with .log”

The >> botsonly.txt isn’t a grep command. It’s a little shell trick: >> appends the output of a command to a file instead of printing it to the screen, in this case to botsonly.txt. (A single > would overwrite the file instead.)
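To try the command without a 20-gig file, here is a minimal sketch that builds two tiny sample logs in a scratch folder and runs the same grep over them. The file names and log lines are invented for illustration; they are not from the client’s logs.

```shell
#!/bin/sh
# Work in a throwaway folder so the *.log glob only sees our sample files.
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# Two hypothetical access logs in combined log format (invented sample data).
printf '%s\n' \
  '66.249.66.1 - - [10/Jan/2019:10:00:00 +0000] "GET /about HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"' \
  '203.0.113.7 - - [10/Jan/2019:10:00:01 +0000] "GET /about HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"' \
  > access1.log
printf '%s\n' \
  '66.249.66.2 - - [10/Jan/2019:10:00:02 +0000] "GET /contact HTTP/1.1" 200 256 "-" "Mozilla/5.0 (compatible; googlebot/2.1)"' \
  > access2.log

# -h: no filename prefixes; -i: match Googlebot in any case; >> appends to the output file.
grep -h -i 'Googlebot' *.log >> botsonly.txt

# Count the lines that survived the filter.
wc -l < botsonly.txt
```

botsonly.txt ends up with the two Googlebot lines (the lowercase one is caught thanks to -i), with no filenames at the start of each line, and the browser request is left out.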

For this client, we wanted to grab multiple bots: Google, Bing, Baidu, DuckDuckBot, and Yandex. So we added -e. That lets us search for multiple strings:

grep -h -i -e 'Googlebot' -e 'Bingbot' -e 'Baiduspider' -e 'DuckDuckBot' -e 'YandexBot' *.log >> botsonly.txt

Every good Linux nerd out there just choked and spat Mountain Dew on their keyboards. You can replace this hackery with some terribly elegant regular expression that accomplishes the same thing with fewer characters. I am not elegant.

Breaking down the full command:

-h: Leaves out filenames

-i: Case insensitive (I’m too lazy to figure out the case for each bot)

-e: Filter for multiple patterns, one pattern after each instance of -e

>>: Append the results to a file
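For the regex-inclined, the five -e patterns can collapse into one extended regular expression using alternation (-E turns on the | operator). A minimal sketch on invented sample lines in a scratch folder, which also checks that both versions keep exactly the same lines:

```shell
#!/bin/sh
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# Invented sample log lines: four bots and one regular browser.
printf '%s\n' \
  '"GET /a HTTP/1.1" 200 "Mozilla/5.0 (compatible; Googlebot/2.1)"' \
  '"GET /b HTTP/1.1" 200 "Mozilla/5.0 (compatible; bingbot/2.0)"' \
  '"GET /c HTTP/1.1" 200 "Mozilla/5.0 (compatible; Baiduspider/2.0)"' \
  '"GET /d HTTP/1.1" 200 "DuckDuckBot/1.0; (+http://duckduckgo.com/duckduckbot.html)"' \
  '"GET /e HTTP/1.1" 200 "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14)"' \
  > sample.log

# The original approach: one -e per bot name.
grep -h -i -e 'Googlebot' -e 'Bingbot' -e 'Baiduspider' -e 'DuckDuckBot' -e 'YandexBot' *.log > bots_e.txt

# The same filter as a single extended regex: -E enables | alternation.
grep -h -i -E 'googlebot|bingbot|baiduspider|duckduckbot|yandexbot' *.log > bots_re.txt

# cmp -s exits 0 only when the two files are byte-for-byte identical.
cmp -s bots_e.txt bots_re.txt && echo "same lines kept"
```

This sketch writes with > rather than >> so that rerunning it doesn’t pile duplicate lines into the output files.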

Bots crawl non-page resources, too, like JavaScript. I didn’t need those, so I filtered them out:

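grep -h -v '\.js' botsonly.txt >> botsnojs.txt

-v inverts the match, finding all lines that do not include the search string. The pattern is quoted so the shell doesn’t try to expand it as a filename wildcard, and the dot is escaped because an unescaped . matches any character in a regular expression. So, the grep command above searched botsonly.txt and wrote all lines that did not include .js to a new, even smaller file, called botsnojs.txt.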
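A minimal sketch of the JavaScript filter on a few invented request lines. It assumes script URLs end in .js followed by either a space or a query string; that tighter -E pattern keeps a URL like /api/data.json, which a bare '\.js' pattern would also throw away.

```shell
#!/bin/sh
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# Invented bot requests: a page, two scripts, and a JSON endpoint.
printf '%s\n' \
  '"GET /products/widget HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /static/app.js HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /static/app.js?v=3 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /api/data.json HTTP/1.1" 200 "Googlebot/2.1"' \
  > botsonly.txt

# -v keeps lines that do NOT match; the pattern is ".js" followed by a space or "?".
grep -v -E '\.js[ ?]' botsonly.txt > botsnojs.txt

cat botsnojs.txt
```

Only the /products/widget and /api/data.json requests survive.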

Result: A Smaller Log File

I started with a 20-gigabyte log file that contained a bazillion lines.

After a few minutes, I had a one-gigabyte log file with under a million lines. Log file analysis step one: Complete.

Analyze in Screaming Frog

Time for Screaming Frog Log File Analyser. Note that this is not their SEO Spider. It’s a different tool.

I opened Screaming Frog, then drag-and-dropped the log file. Poof.

If your log file uses relative addresses—if it doesn’t have your domain name for each request—then Screaming Frog prompts you to enter your site URL.

What We Found

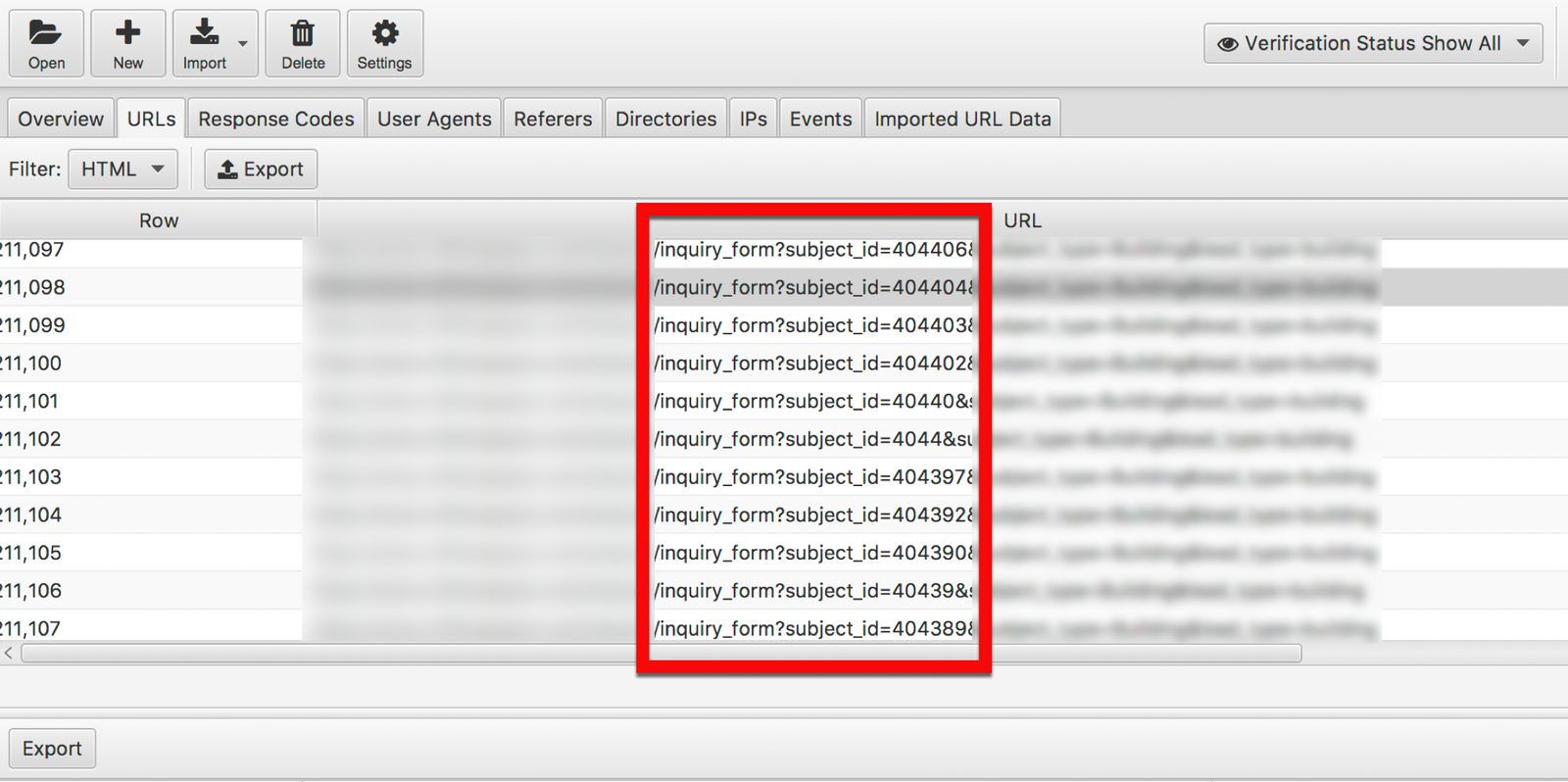

Google was going bonkers. Why? Every page on the client’s site had a link to an inquiry form.

A teeny link. A link with ‘x’ as the anchor text, actually, because it was a leftover from the old code. The link pointed at:

inquiry_form?subject_id=[id]

If you’re an SEO, you just cringed. It’s duplicate content hell: Hundreds of thousands of pages, all pointing at the same inquiry form, all at unique URLs. And Google saw them all:


Inquiry Forms Run Amok

60% of Googlebot events hit inquiry_form?subject_id= pages. The client’s site was burning crawl budget.
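Screaming Frog reported that share for us. As a rough cross-check from the shell, the same ratio is two grep counts divided: Googlebot requests for inquiry_form?subject_id= URLs over all Googlebot requests. A minimal sketch on invented sample lines (botsnojs.txt is the filtered file from earlier; the data here is made up, not the client’s):

```shell
#!/bin/sh
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# Five invented Googlebot requests, three of them to the inquiry form.
printf '%s\n' \
  '"GET /inquiry_form?subject_id=101 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /inquiry_form?subject_id=102 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /inquiry_form?subject_id=103 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /subjects/101 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /subjects/102 HTTP/1.1" 200 "Googlebot/2.1"' \
  > botsnojs.txt

# -c prints the number of matching lines instead of the lines themselves.
total=$(grep -c -i 'googlebot' botsnojs.txt)
[ "$total" -gt 0 ] || { echo "no Googlebot lines found"; exit 1; }

# -F treats the pattern as a fixed string, so "?" is matched literally.
inquiry=$(grep -i 'googlebot' botsnojs.txt | grep -c -F 'inquiry_form?subject_id=')

# Shell arithmetic is integer-only, so the percentage is rounded down.
share=$(( inquiry * 100 / total ))
echo "inquiry_form share of Googlebot requests: ${share}%"
```

On this sample it prints a 60% share (3 of 5 requests).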

The Fix(es): Why Log Files Matter

First, we wanted to delete the links. That couldn’t happen. Then, we wanted to change all inquiry links to use fragments:

inquiry_form#subject_id=[id]

Google ignores everything after the ‘#’. Problem solved!
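Fragments work because the part of a URL after ‘#’ is never sent to the server in the HTTP request, so every inquiry_form#subject_id=[id] link resolves to one fetchable URL, while every inquiry_form?subject_id=[id] link is a distinct one. A minimal sketch of that normalization using POSIX parameter expansion; strip_fragment is a hypothetical helper and the link values are made-up examples:

```shell
#!/bin/sh
# Hypothetical helper: print what would actually be requested for each link.
# ${url%%#*} removes the longest suffix starting at "#", i.e. the fragment.
strip_fragment() {
  for url in "$@"; do
    printf '%s\n' "${url%%#*}"
  done
}

# Old query-string links vs. the fragment fix (invented IDs).
query_count=$(strip_fragment 'inquiry_form?subject_id=101' 'inquiry_form?subject_id=102' |
  sort -u | wc -l | tr -d ' ')
fragment_count=$(strip_fragment 'inquiry_form#subject_id=101' 'inquiry_form#subject_id=102' |
  sort -u | wc -l | tr -d ' ')

echo "distinct URLs, query strings: $query_count"   # 2
echo "distinct URLs, fragments:     $fragment_count" # 1
```

Two links with query strings stay two URLs; two links with fragments collapse into one.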

Nope. The development team was slammed. So we tried a few less-than-ideal quick fixes:

- robots.txt

- meta robots

We tried rel canonical. No, we didn’t, because rel canonical was going to work about as well as trying to pee through a Cheerio in a hurricane (any parents out there know whereof I speak).
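For reference, the two quick fixes look roughly like this. It’s a sketch only, assuming the form lives at /inquiry_form on the site root; the exact rules used for the client aren’t given here. The robots.txt rule targets every URL whose path starts with /inquiry_form, including the ?subject_id= variants, because robots.txt rules match URL prefixes. The meta robots tag goes in the form page’s head.

```shell
#!/bin/sh
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# Quick fix 1 (hypothetical rule): disallow crawling of anything starting with /inquiry_form.
cat > robots.txt <<'EOF'
User-agent: *
Disallow: /inquiry_form
EOF

# Quick fix 2 (hypothetical markup): a meta robots tag for the form page's <head>.
cat > meta-robots.html <<'EOF'
<meta name="robots" content="noindex, nofollow">
EOF

cat robots.txt meta-robots.html
```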

Each time, we waited a few days, got a new log file snippet, filtered it, and analyzed it.
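That filter-then-analyze loop is easy to wrap up so each new snapshot gets the same treatment. A minimal sketch, assuming a hypothetical check_snapshot helper and invented before/after sample logs; it chains the bot filter and the JavaScript filter in one pipe, then reports the inquiry_form share of Googlebot requests:

```shell
#!/bin/sh
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# Hypothetical helper: filter one or more log files to bots minus JavaScript,
# then print what share of Googlebot requests hit the inquiry form.
check_snapshot() {
  tmp=$(mktemp)
  grep -h -i -E 'googlebot|bingbot|baiduspider|duckduckbot|yandexbot' "$@" |
    grep -v -E '\.js[ ?]' > "$tmp"
  total=$(grep -c -i 'googlebot' "$tmp")
  inquiry=$(grep -i 'googlebot' "$tmp" | grep -c -F 'inquiry_form?subject_id=')
  rm -f "$tmp"
  if [ "$total" -eq 0 ]; then
    echo "$*: no Googlebot requests found"
    return 1
  fi
  echo "$*: $(( inquiry * 100 / total ))% of Googlebot requests hit inquiry_form"
}

# Invented snapshots from before and after a fix.
printf '%s\n' \
  '"GET /inquiry_form?subject_id=1 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /inquiry_form?subject_id=2 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /subjects/1 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /static/app.js HTTP/1.1" 200 "Googlebot/2.1"' \
  > before.log
printf '%s\n' \
  '"GET /inquiry_form?subject_id=3 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /subjects/1 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /subjects/2 HTTP/1.1" 200 "Googlebot/2.1"' \
  '"GET /subjects/3 HTTP/1.1" 200 "Googlebot/2.1"' \
  > after.log

check_snapshot before.log
check_snapshot after.log
```

On these samples the share drops from 66% to 25%; comparing numbers like these from one snapshot to the next is the quick signal that a fix is (or isn’t) working.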

We expected Googlebot to follow the various robots directives. It didn’t. Google kept cheerfully crawling every inquiry_form URL, expending crawl budget, and ignoring 50% of our client’s site.

Thanks to the logs, though, we were able to quickly analyze bot behavior and know whether a fix was working. We didn’t have to wait weeks for improvements (or not) in organic traffic or indexation data in Google Search Console.

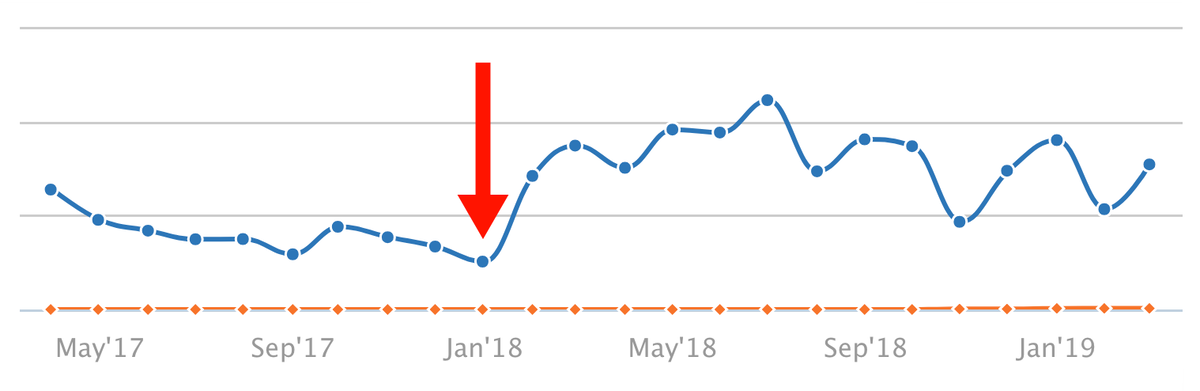

A Happy Ending

The logs showed that quick fixes weren’t fixing anything. If we were going to resolve this problem, we had to switch to URL fragments. Our analysis made a stronger case. The client raised the priority of this recommendation. The development team got the resources they needed and changed to fragments.

That was in January:


Immediate Lift From URL Fragments

These results are bragworthy. But the real story is the log files. They let us analyze more accurately, diagnose the problem, and test solutions far faster than otherwise possible.

If you think your site has a crawl issue, look at the logs. Waste no time. You can thank me later.

I nerd out about this all the time. If you have a question, leave it below in the comments, or hit me up at @portentint.

Or, if you want to make me feel important, reach out on LinkedIn.

The post Log File Analysis For SEO appeared first on Portent.

Google’s Latest Broad Core Ranking Update: Florida 2

Florida 2 Algorithm Update

What’s Going On?

On March 12th, Google released what is being referred to as the Florida 2 algorithm update. Website owners are already noticing significant shifts in their keyword rankings. Google’s algorithm updates vary in how much broad notice they receive, but Florida 2 is one that every marketer needs to pay close attention to.

Who Was Impacted by the Florida 2 Algorithm Update?

Google makes several broad ranking algorithm updates each year, but only confirms updates that result in widespread impact. Google did confirm Florida 2, and there are SEOs out there already calling it the “biggest update [Google has made] in years.” Unlike last August’s Medic Update, Florida 2 isn’t targeting a specific niche or vertical, which means the entire search community needs to be paying attention as we try to better understand the changes Google is making to its search algorithm.

While it’s still too early for our team to pinpoint what exactly is being impacted by Florida 2, we’re going to keep a very close eye on where things fall out over the next several days (and weeks).

Here’s what we’ve seen so far:

- Indiscriminate swings in site traffic & ranking, with some websites reporting zero traffic after the update.

- Evidence of traffic increases for site owners who are prioritizing quality content and page speed.

- A worldwide impact – this is not a niche specific or region specific update.

- Potential adjustments in how Google is interpreting particular search queries.

- Backlink quality possibly being a very important factor.

- Short-term keyword ranking changes (declines in ranking that then bounce back to “normal” after a few hours).

My Rankings Took a Hit. What Can I Do?

In short? Nothing. But don’t panic.

As with any Google algorithm update, websites will see increases or declines in their rankings. There is no quick fix for sites or web pages that experience negative results from the update; don’t make the mistake of aggressively changing aspects of your site without fully understanding the broader impact those changes will have. If you are being negatively impacted by Florida 2 (or any other algorithm update), your best bet is continuing to focus on offering the best content you can, as that is what Google always seeks to reward.

For advice on how to produce great content, a good starting point is to review Google’s Search Quality Guidelines. For copywriting tips and strategies, check out the copywriting section of the Portent blog.

We’ll continue to update this post as we gather more information.

The post Google’s Latest Broad Core Ranking Update: Florida 2 appeared first on Portent.

5 MISTAKES Newbies Make with Facebook Ads – 2019

5 MISTAKES Newbies Make with Facebook Ads – 2019 Grab my FREE Facebook Ads Mini Course https://www.yoursocialsystem.com/fb-ads Over the last 3.5 …

The Scoop on Using Bots for Social Media Marketing

MarketingMonth: Re-visit Eazl’s Growth Hacking Masterclass for all new content on bots, digital PR in 2017, and more or get lifetime access for $14 at …

The Growth Mindset Meditation

The Growth Mindset Meditation: Built on Research, You Can Use this to Help Cultivate a Positive Mindset as You Face Challenges ❃ The Growth Mindset has …

Brand Update: Maruti Attempts Channel Differentiation using Nexa and Arena

How To Create A Facebook Live Video For Beginners (2019) – Facebook Live Video Tutorial

How To Create A Facebook Live Video For Beginners (2019) – Facebook Live Video Tutorial Subscribe so you never miss another video https://goo.gl/xqijqT …
